In my last post, I wrote about my frustrations as a Haskell user on Windows. It led to a good discussion on Reddit about a number of points I raised, as well as some good comments on this site. I received more than one recommendation to try out F# as an alternative to Haskell. I’ve been casually exploring it for a few weeks now, and I have decided not to pursue it. My impressions are below. Keep in mind that I am coming to F# as a barely experienced Haskell user, but a Haskell user nonetheless. My impressions are also restricted to F# in Windows, as I did not investigate Mono or other Unix tools.

F# – The Good

Full citizen status – Most immediately noticeable when coming to F# from Haskell is that Windows users come first. You do not need to install any Unixy tool chains to build what you want. In fact, almost everything you could possibly want is downloadable straight from Microsoft’s enormous and comprehensive .Net framework. Everything just works.

Documentation – Being part of the .Net framework, F# has a lot of corporate resources behind it at MS. This includes in depth documentation about nearly every .Net component. What is more, the .Net community is enormous, so finding examples online for pretty much anything is much easier than it is for Haskell.

Advanced tooling – One of the things Haskell suffers from, in my opinion, is lack of advanced development tools, including a standard and full-featured IDE. In F#, you get Visual Studio, a fully featured IDE that has been tested by thousands, if not millions. Haskell has no equivalent. Leksah is decent, but it is nowhere near as capable as VS.

For example, in F# on VS you can scroll over (almost) any value or function and see the inferred type signature for it. In Haskell, if I have a tough type error, I usually have to start explicitly defining the types of values and functions to narrow down the scope of my mistake. In VS, I can just scroll over the item in question and immediately get hints as to where I went wrong. Unfortunately, as far as I know this only works for named functions and not functions defined as symbols (operators).

Sane strings – Haskell’s default implementation of strings is a linked list of characters. While providing some advantages, in most cases it’s plain inefficient and unusable. GHC has some extensions to help fix this, such as the OverloadedStrings extension, and there are libraries to help as well, such as the text library. But different libraries choose to handle strings in different ways, so you often find yourself calling a number of conversion operations just to glue all the right types together. F#, on the other hand, has one standard string implementation that everyone uses.

Standards – Somewhat similar to the previous point, the Haskell ecosystem sometimes feels like the wild west, with different projects experimenting with types and strategies. Frequently, this can lead to ambiguity in how a newbie should approach a particular problem. In F#, there is usually a standard, recommended way to do things – the .Net way.

Computation expressions – This is a feature in F# similar to monads that is in some ways less powerful (see below) and in some ways more powerful. Like do notation, CE’s are syntactic sugar for monad-like operations. The familiar Bind and Return are present, like Haskell, but F# allows additional desugaring of loop expressions, the ability to implement lazy evaluation, and more.

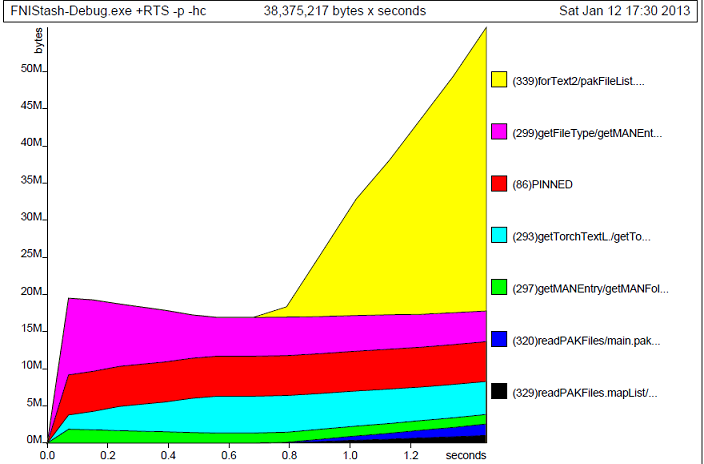

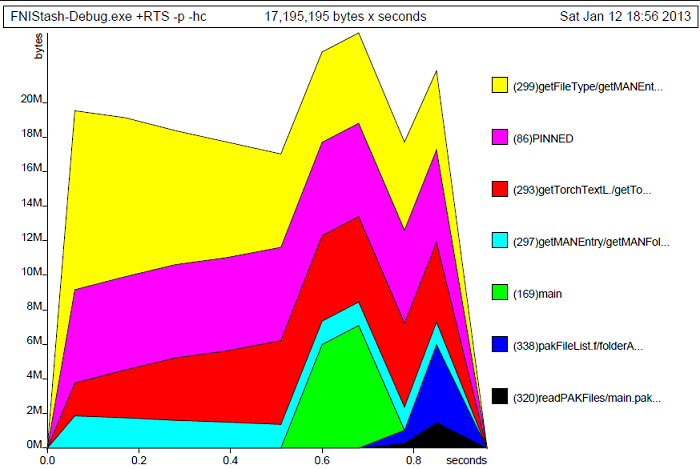

No lazy evaluation (unless you want it) – Arguably a con overall, but my point here is that reasoning about performance and space usage is easier in F# due to strict evaluation semantics. The profiling utilities built into VS make this even easier still.

F# – The Bad

Verbose and odd syntax – A number of aspects of F# rub me the wrong way.

First, every declaration requires a let keyword. Let let let let let. Haskell in a lot of ways is much more concise because several values can be declared using one let.

Secondly (and this drives me nuts), F# function and type declarations must be made before the function is used. In Haskell, I often use a few helper functions that are defined down near the bottom of the file, but in F# those helpers must be defined near the top of else they can’t be used. Having such an antiquated restriction as this in a language a modern as F# boggles my mind.

Thirdly, the declaration of types and their constructors is sometimes not consistent. For instance, a constructed tuple in F# is (a,b) (like Haskell), but the type of this tuple is c * d. It’s not a hard thing to get over, but it does accentuate how self-consistent Haskell is.

No type classes! – I put an exclamation point on this one because it might be the single most important reason F# left a bad taste in my mouth. I did not realize the power of type classes until I tried to do functional programming without them. Take for example the monad type class. If a type is an instance of the monad type class, it is a given that it will work with replicateM, sequence, and a number of other functions that work on monads. If you have a new type, just declare a monad instance for it and sequence will work with it as well.

Trying to do this in F# is an exercise in frustration. There are ways to simulate type classes, but you have to do all kinds of inlining and type gymnastics to make the .Net CLR happy. Since it’s not readily supported by the language, there is no standard library of monad functions, and in a lot of situations you’ll have to reimplement these extraordinarily useful utilities yourself. This can lead to a good deal of code duplication. Just check out the FSharpx monad library code for examples.

Dependence on .Net – .Net is a comprehensive, full-featured framework, but it is huge and designed for OO languages. This results in classes like Stream, StreamReader, BinaryReader, TextReader, etc., which are all slight variations on each other. F# uses these same classes. Digging through the docs to find what you need can be challenge, but less so if you’re already familiar with .Net. In Haskell though, almost all of these can be replaced by mapping to/from a plain old byte string. Why does OO have so much seemingly unnecessary complexity to simply deserialize a type?

“Functional first” – F# is often described as a “functional first” language, meaning it supports both functional and OO programming paradigms. For some this is a plus (right tool for the right job and all that), but to me it seems like F# suffers from split personality disorder. Some of the F# libraries feel like little more than functional wrappers around an OO core. For a newbie, it’s not entire clear if these are merely measures of convenience or of necessity.

Back to Haskell

I doubt I was able to completely commit my thoughts on F# to this page, but this is at least a good start. I’ve decided to continue to wrestle with Haskell on Windows because even though the ecosystem is a house of cards, actually coding in Haskell is much more enjoyable than F#. I’ve started over with Haskell Platform to hopefully resolve as many compatibility issues as i can.